10 Real-World Examples of Autonomous AI Agents in 2025 — Outcomes, Failures & Lessons Learned

Introduction

Autonomous AI agents are no longer just hype. In 2025, they’re running inside businesses, automating workflows, making decisions, and collaborating with humans in high-stakes domains.

The problem? Most articles list “AI agent use cases” without showing real deployments, outcomes, or failures. In this guide, we look at 10 real-world examples of autonomous decision-making AI agents — including what worked, what broke, and how you can learn from them.

1. Salesforce Agentforce — Customer Service AI Agents

What it does:

Salesforce launched Agentforce to handle customer queries autonomously within its CRM ecosystem. The agents can interpret user intent, fetch knowledge, and resolve issues without human escalation.

Outcomes:

- Companies such as Fisher & Paykel report that Agentforce resolves a high percentage of customer queries on its own.

- Businesses see faster average handling times and improved customer satisfaction.

Failure modes:

- Struggles with ambiguous or emotionally charged queries.

- Risks hallucinating unsupported answers if knowledge is poorly curated.

Guardrails & stack:

- Built on Salesforce Einstein LLMs.

- Integrated into CRM workflows with allow-lists.

- Human fallback when confidence is low.

Lesson: Autonomous service agents thrive on structured, repetitive queries — but always need a human safety net.
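
The allow-list and low-confidence fallback described above can be sketched as a small routing function. This is a minimal illustration, not Agentforce's implementation: the threshold value, the action names, and the `Proposal` type are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical values for illustration; not taken from any vendor.
CONFIDENCE_THRESHOLD = 0.8
ALLOWED_ACTIONS = {"lookup_order", "send_kb_article", "update_address"}


@dataclass
class Proposal:
    action: str        # what the agent wants to do
    confidence: float  # agent-reported confidence in [0, 1]


def route(proposal: Proposal) -> str:
    """Return 'execute' or 'escalate' for a proposed agent action."""
    if proposal.action not in ALLOWED_ACTIONS:
        return "escalate"  # anything outside the allow-list goes to a human
    if proposal.confidence < CONFIDENCE_THRESHOLD:
        return "escalate"  # low confidence goes to a human
    return "execute"
```

Checking the allow-list before the confidence score means a highly confident agent still cannot take an action nobody approved in advance.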

2. Careem + Oracle — Autonomous Invoice Processing

What it does:

Ride-hailing giant Careem partnered with Oracle to automate invoice processing. Agents extract data, validate against contracts, and trigger payments.

Outcomes:

- 70% reduction in invoice processing time, cutting workflows from days to hours.

- Faster vendor payments and reduced manual effort.

Failure modes:

- Struggles with unusual invoice formats.

- Potential fraud risk if malicious invoices bypass rules.

Guardrails & stack:

- Oracle ERP + AI services for document ingestion.

- OCR + NLP pipeline + decision rules.

- Tiered thresholds: high-value invoices require human approval.

Lesson: Finance agents deliver ROI quickly when exceptions are tightly governed.
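
Tiered approval thresholds like the ones above might look like the following sketch. The limits, tier names, and the `matches_contract` flag are assumptions made for illustration; they do not describe Careem's or Oracle's actual rules.

```python
from decimal import Decimal

# Hypothetical limits; real values would come from finance policy.
AUTO_APPROVE_LIMIT = Decimal("1000")
MANAGER_LIMIT = Decimal("25000")


def approval_tier(amount: Decimal, matches_contract: bool) -> str:
    """Decide who must approve an invoice before payment is triggered."""
    if not matches_contract:
        return "exception_queue"   # failed validation always goes to a human
    if amount <= AUTO_APPROVE_LIMIT:
        return "auto_approve"      # low value: the agent may pay
    if amount <= MANAGER_LIMIT:
        return "manager_review"    # mid value: one human approval
    return "director_review"       # high value: senior approval
```

Using `Decimal` rather than `float` avoids rounding surprises when amounts sit exactly on a threshold.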

3. Healthcare Triage Agents

What they do:

Hospitals and startups deploy agents to gather patient symptoms, suggest likely conditions, and summarize for doctors.

Outcomes:

- Clinical pilots show 15–20% clinician time savings in emergency triage.

- Faster throughput in patient intake.

Failure modes:

- Missing rare symptoms.

- Overconfidence in uncertain diagnoses.

Guardrails:

- Always positioned as decision support, never as a replacement for clinicians.

- Multi-level validation and human oversight.

4. Developer Productivity Agents

What they do:

AI coding agents like xAI’s Grok-Code-Fast and GitHub Copilot Agents now go beyond autocomplete — they debug, run tests, and open pull requests.

Outcomes:

- Internal studies: 20–30% of new code authored with AI assistance.

- 25% faster bug fixes for common issues.

Failure modes:

- Struggle with legacy codebases.

- Risk of insecure code entering production.

Guardrails & stack:

- LLMs integrated with IDEs and GitHub Actions.

- Static analysis, CI/CD test enforcement.

- Human review before merge.

Lesson: Agents accelerate dev cycles, but CI/CD + human code review are mandatory.

5. Autonomous DevOps / AIOps Agents

What they do:

Monitor logs, detect anomalies, suggest fixes — and in some cases execute them.

Outcomes:

- 30% faster incident resolution in enterprise pilots.

- Reduced downtime for critical systems.

Failure modes:

- False positives lead to alert fatigue.

Guardrails:

- Shadow mode before live deployment.

- Human approval for remediation.
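
One way to picture shadow mode is a wrapper that records what the agent would have done and only executes when explicitly switched to live. The sketch below is a simplified assumption about how such a wrapper could work; `ShadowRunner` and its method names are hypothetical.

```python
from typing import Callable, Dict, List, Optional


class ShadowRunner:
    """Log proposed remediations; execute them only when live=True."""

    def __init__(self, execute: Callable[[str], None], live: bool = False):
        self.execute = execute
        self.live = live
        self.log: List[Dict[str, Optional[str]]] = []

    def handle(self, proposed: str, human_action: Optional[str] = None) -> None:
        # Always record the proposal next to what the human actually did.
        self.log.append({"proposed": proposed, "human": human_action})
        if self.live:
            self.execute(proposed)

    def agreement_rate(self) -> float:
        """Share of logged cases where the agent matched the human."""
        compared = [r for r in self.log if r["human"] is not None]
        if not compared:
            return 0.0
        matches = sum(r["proposed"] == r["human"] for r in compared)
        return matches / len(compared)
```

The agreement rate gives a simple, measurable criterion for deciding when the agent is ready to move from shadow mode to human-approved live actions.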

6. Financial Research & Trading Agents

What they do:

Automate financial research, summarize filings, and recommend trades.

Outcomes:

- Faster research pipelines.

- Decision support for analysts.

Failure modes:

- Overfitting, biased signals, hallucinations.

Guardrails:

- Strictly advisory; no autonomous execution.

7. Sales Outreach Agents

What they do:

Agents research prospects, personalize emails, and score leads.

Outcomes:

- 20–30% uplift in meetings booked in vendor case studies.

Failure modes:

- Spammy or generic personalization.

Guardrails:

- Rate limits.

- Human approval for high-value prospects.
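
A rate limit for outbound emails can be as simple as a sliding window over recent send times. The class below is an illustrative sketch; the name `SlidingWindowLimiter` and its parameters are hypothetical, and the injectable `clock` exists only to make the behavior easy to test.

```python
import time
from collections import deque
from typing import Callable, Deque


class SlidingWindowLimiter:
    """Allow at most max_sends within any window_seconds interval."""

    def __init__(self, max_sends: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_sends = max_sends
        self.window = window_seconds
        self.clock = clock
        self.sent: Deque[float] = deque()  # timestamps of recent sends

    def allow(self) -> bool:
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self.sent and now - self.sent[0] >= self.window:
            self.sent.popleft()
        if len(self.sent) >= self.max_sends:
            return False  # over the limit: hold the email
        self.sent.append(now)
        return True
```

An agent would call `allow()` before each send and queue or drop the message when it returns `False`.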

8. Procurement Agents

What they do:

Process contracts, match purchase orders, and validate vendor data.

Outcomes:

- Hours saved per transaction.

- Lower error rates.

Failure modes:

- Struggle with exceptions.

Guardrails:

- Exception routing.

- Audit logging.

9. Security Response Agents

What they do:

Monitor systems, detect intrusions, and suggest containment steps.

Outcomes:

- Faster mean-time-to-detect (MTTD) in enterprise pilots.

Failure modes:

- Blocking legitimate users.

Guardrails:

- Shadow mode deployment.

- Tiered responses.

10. Personal Productivity Agents

What they do:

Assist individuals with email triage, scheduling, and prioritization.

Outcomes:

- Early adopters save 2–3 hours per week.

Failure modes:

- Scheduling conflicts.

Guardrails:

- Human override for final scheduling.

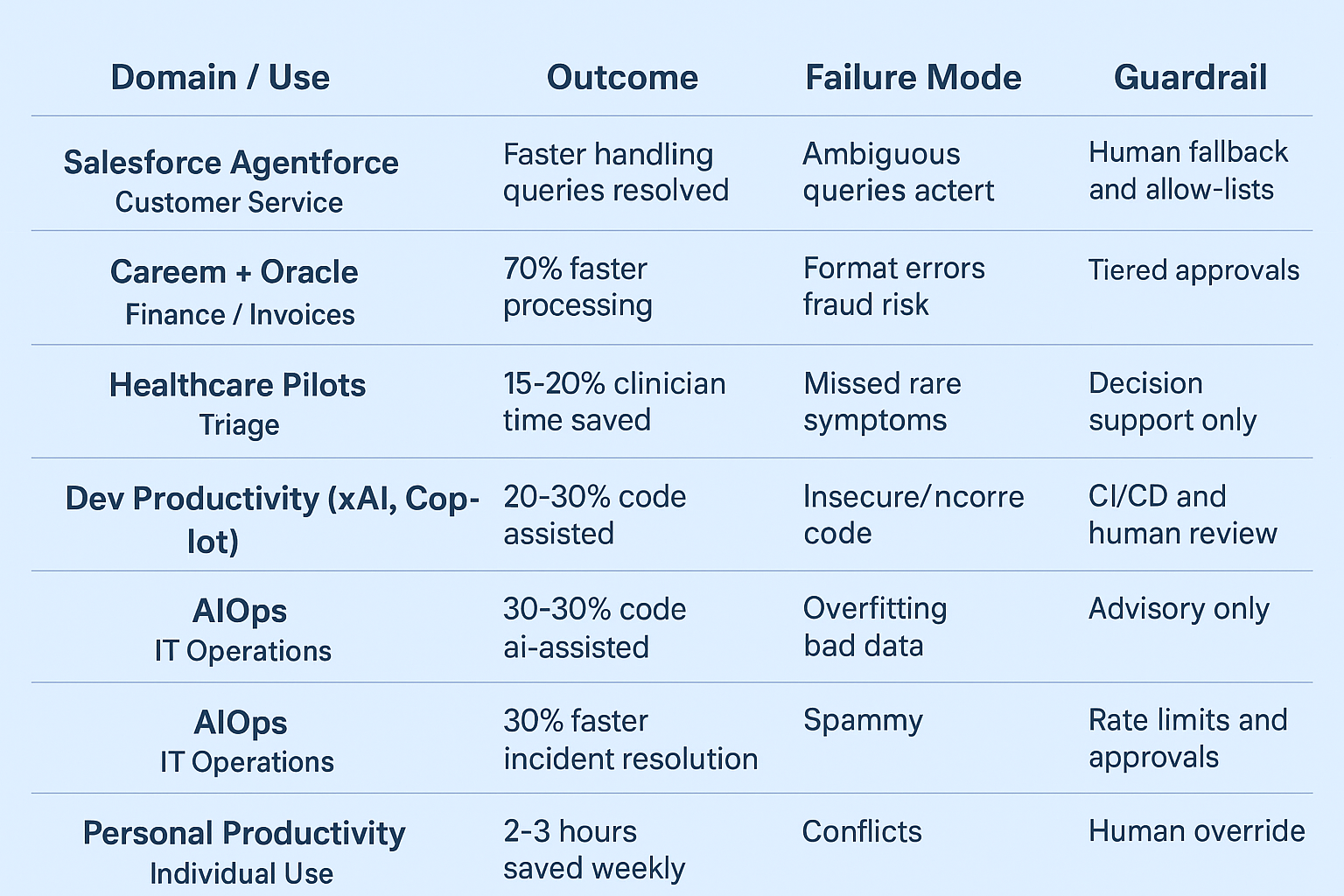

Comparison Table

| # | Example | Domain | Outcome | Failure Mode | Guardrail |
|---|---|---|---|---|---|
| 1 | Salesforce Agentforce | Customer service | Faster handling, more queries resolved | Ambiguous queries, hallucinations | Human fallback, allow-lists |
| 2 | Careem + Oracle | Finance / invoices | 70% faster processing | Format errors, fraud risk | Tiered approvals |
| 3 | Healthcare pilots | Triage | 15–20% clinician time saved | Missed rare symptoms | Decision support only |
| 4 | Dev productivity (xAI, Copilot) | Software dev | 20–30% code AI-assisted | Insecure/incorrect code | CI/CD, human review |
| 5 | AIOps | IT Ops | 30% faster incident resolution | False positives | Shadow mode, approvals |
| 6 | Finance trading | Research/trading | Faster research | Overfitting, bad data | Advisory only |
| 7 | Sales outreach | Sales | 20–30% more meetings | Spammy outreach | Rate limits, approvals |
| 8 | Procurement | Supply chain | Hours saved, fewer errors | Exception handling gaps | Exception routing |
| 9 | Security response | Cybersecurity | Faster MTTD | Legitimate blocks | Shadow mode, tiered |
| 10 | Personal productivity | Individual use | 2–3 hrs saved weekly | Conflicts | Human override |

Key Lessons

- Metrics matter: measure time saved, errors avoided, and cost per task.

- Failure is inevitable: hallucinations, loops, and over-automation occur everywhere.

- Guardrails save you: budget caps, human approval, and kill switches prevent damage.

- Shadow mode first: test before deploying to production.

- Hybrid wins: the best setups pair autonomy with human oversight.
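
To make the budget cap and kill switch concrete, here is a minimal sketch of an agent loop that checks all of its limits before every step. The `run_agent` function, its parameters, and the `(name, cost)` step format are assumptions for illustration, standing in for real tool calls.

```python
import threading
from typing import Iterable, List, Tuple


def run_agent(steps: Iterable[Tuple[str, float]], max_steps: int,
              max_cost: float, kill_switch: threading.Event
              ) -> Tuple[List[str], str]:
    """Run (name, cost) steps until done or until a guardrail trips."""
    spent = 0.0
    done: List[str] = []
    for i, (name, cost) in enumerate(steps):
        if kill_switch.is_set():
            return done, "killed"      # operator pulled the plug
        if i >= max_steps:
            return done, "step_cap"    # guards against runaway loops
        if spent + cost > max_cost:
            return done, "budget_cap"  # refuse the step that would overspend
        spent += cost
        done.append(name)              # stand-in for executing the step
    return done, "completed"
```

Because every limit is checked before the step runs, a tripped guardrail stops the agent without leaving an action half-finished. A `threading.Event` lets an operator or monitoring thread set the kill switch from outside the loop.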

Conclusion

Autonomous AI agents are no longer theoretical. They’re working today in customer service, finance, healthcare, and even software development.

But success doesn’t come from plugging in an LLM and hoping. It comes from operational discipline — observability, guardrails, and governance.

If you’re exploring agents, start small: deploy in shadow mode, measure outcomes, and always build in human fallback.

👉 Next read: AgentOps in 2025 — A Practical Runbook for templates, decision logs, and incident response checklists.


FAQs

Q1: What are real-world examples of autonomous AI agents?

Salesforce Agentforce in customer support, Careem’s invoice processing with Oracle, and GitHub Copilot Agents in software development are notable 2025 examples.

Q2: Can AI agents be trusted in finance?

Yes, but only as decision-support tools. Autonomous execution without human oversight is too risky.

Q3: How are AI agents used in healthcare?

Hospitals use them for patient triage, where clinical pilots show 15–20% clinician time savings, but always with human review.

Q4: What are the risks of AI agents?

Hallucinations, loops, misclassification, over-automation, and security vulnerabilities.

Q5: How do you safely deploy an AI agent?

Run in shadow mode first, add guardrails (approvals, caps, kill switches), and monitor logs.

Frequently Asked Questions

What is AgentOps in AI?

AgentOps is the operational practice of deploying, monitoring, testing, and governing autonomous AI agents. It covers structured logging (goals, plans, tool calls, decisions), guardrails, human-in-the-loop controls, observability dashboards, and incident runbooks.
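
As a rough illustration of structured logging, each agent decision can be written as one JSON record with the fields named above. The field names and the `decision_record` helper are hypothetical; a real deployment would follow its own logging schema.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict, List


def decision_record(goal: str, plan: List[str],
                    tool_call: Dict[str, Any], decision: str) -> str:
    """Serialize one agent decision as a single JSON log line."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),  # UTC timestamp
        "goal": goal,            # what the agent was asked to achieve
        "plan": plan,            # steps the agent intends to take
        "tool_call": tool_call,  # tool name and arguments
        "decision": decision,    # e.g. "execute" or "escalate"
    }
    return json.dumps(record, sort_keys=True)
```

One record per line keeps the log easy to search during audits and post-mortems.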

Why do autonomous AI agents need monitoring?

Agents act autonomously and can loop, hallucinate, overspend, or take unsafe actions. Monitoring provides transparency, detects failures early, and enables audits and post-mortems so teams can fix and improve agents safely.

What guardrails should be in place for AI agents?

Effective guardrails include policy checks (pre/post action), allow-lists of tools/domains, budget and step caps, latency/time limits, and a kill-switch. Combine these with logging and human-approval thresholds for high-risk actions.

How do you test the safety of an AI agent?

Use a red-team approach: run adversarial prompt-injection tests, simulate API/tool failures, create loop-inducing tasks, and attempt permission escalations. Start in shadow mode and validate results before enabling live actions.

Which AI agent tasks should always require human approval?

High-risk actions—such as financial transactions, data deletion, public-facing communications, and any operation involving sensitive personal data—should require human review and approval before execution.
