AgentOps in 2025: A Practical Runbook to Monitor, Test & Govern Autonomous Decision-Making AI Agents

Introduction

Autonomous AI agents are no longer research experiments. They can plan, call tools, and act across systems with minimal supervision. But with power comes risk: loops, runaway costs, prompt injections, or even unauthorized external actions.

This is why AgentOps has emerged. It’s the operational discipline of running AI agents in production — combining the best of DevOps, MLOps, and governance into one framework. In this guide, we’ll move past the hype and give you practical runbooks, templates, and checklists you can use to safely deploy and monitor autonomous decision-making agents in 2025.

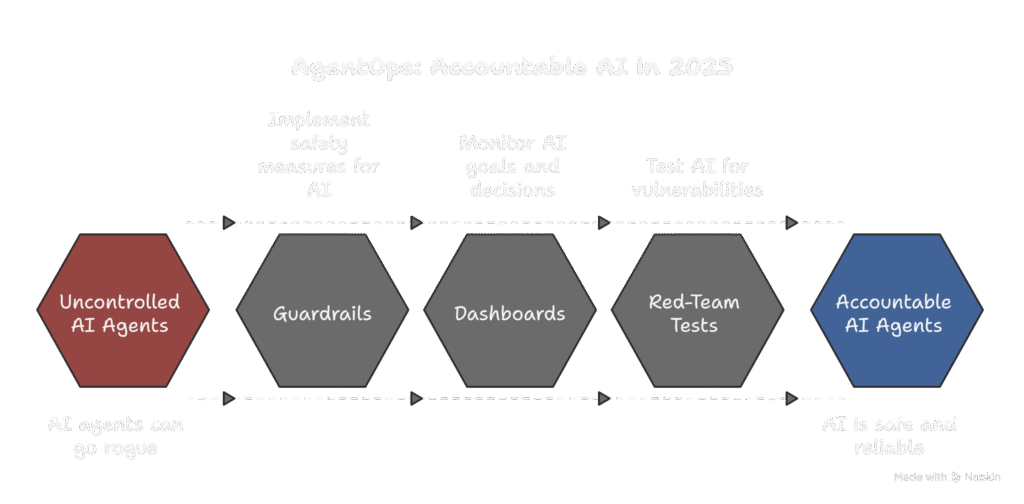

What is AgentOps?

AgentOps is the set of engineering and operational practices that ensure autonomous agents behave reliably and safely. Where MLOps focuses on model lifecycle and DevOps handles infrastructure, AgentOps adds:

- Observability of agent goals, plans, tool calls, and decisions.

- Guardrails to prevent misuse or policy violations.

- Human-in-the-loop patterns for high-risk actions.

- Incident response to quickly detect, contain, and learn from failures.

In short: AgentOps turns agents from black boxes into accountable systems.

The Five Logs Every Agent Needs

To trace and audit any agent run, capture these five logs:

- Goal Log — the high-level task given to the agent.

- Plan Log — the agent’s proposed sequence of steps.

- Tool Call Log — every API/tool call, inputs/outputs, timestamps, and status codes.

- Decision Log — final decisions taken, including approvals or reversals.

- Failure/Intervention Log — errors, timeouts, loops, and human overrides.

These logs are the backbone of observability and governance. Without them, a post-mortem has nothing to reconstruct the run from.
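
As a rough illustration, the five logs can share one structured event format keyed by a run ID, so a whole run can be replayed in order. The sketch below is a minimal in-memory version; `LogEvent`, `RunLogger`, and the payload field names are hypothetical, not part of any particular AgentOps tool.

```python
import json
import time
import uuid
from dataclasses import dataclass, field, asdict

# The five log types from the list above (names are illustrative).
LOG_TYPES = {"goal", "plan", "tool_call", "decision", "failure"}


@dataclass
class LogEvent:
    run_id: str
    log_type: str  # must be one of LOG_TYPES
    payload: dict
    timestamp: float = field(default_factory=time.time)

    def __post_init__(self):
        if self.log_type not in LOG_TYPES:
            raise ValueError(f"unknown log type: {self.log_type}")

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


class RunLogger:
    """Collects events for one agent run in memory (assumption: a real
    deployment would write to a durable log store instead)."""

    def __init__(self):
        self.run_id = str(uuid.uuid4())
        self.events = []

    def log(self, log_type, **payload):
        event = LogEvent(self.run_id, log_type, payload)
        self.events.append(event)
        return event

    def export(self):
        # One JSON string per event, in the order they were recorded.
        return [event.to_json() for event in self.events]


# Example run with one event of each type (all values are made up).
logger = RunLogger()
logger.log("goal", text="Refund order 1234")
logger.log("plan", steps=["look up order", "issue refund"])
logger.log("tool_call", tool="orders.get", status=200, latency_ms=87)
logger.log("decision", action="issue_refund", approved_by="human")
logger.log("failure", kind="timeout", detail="refund API did not respond")
```

Keeping every event on the same `run_id` is the design choice that matters here: it lets you pull the goal, plan, tool calls, decisions, and failures for a single run with one query.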

Guardrails That Actually Work

The best way to keep agents safe isn’t to hope for perfect prompts — it’s to build structural guardrails:

- Policy checks before and after actions (e.g., no sensitive data exfiltration).

- Allow-lists of tools, domains, and APIs the agent can call.

- Budget caps on tokens, API cost, and number of steps.

- Latency/time caps to abort runaway loops.

- Kill-switch to immediately stop an agent and roll back its actions where possible.

Think of guardrails as the safety net that makes autonomy usable.
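
One way to make these guardrails structural is to run a single check before every tool call. The following is a minimal sketch under stated assumptions: the `Guardrails` class, its limits, and the cost estimate passed in by the caller are all hypothetical, and it only stops further calls. It does not roll anything back.

```python
import time


class GuardrailViolation(Exception):
    """Raised when a tool call would break a guardrail."""


class Guardrails:
    def __init__(self, allowed_tools, max_steps, max_cost_usd, max_seconds,
                 clock=time.monotonic):
        self.allowed_tools = set(allowed_tools)
        self.max_steps = max_steps
        self.max_cost_usd = max_cost_usd
        self.max_seconds = max_seconds
        self._clock = clock
        self._started = clock()
        self.steps = 0
        self.cost_usd = 0.0
        self.killed = False

    def kill(self):
        # Kill-switch: every later check() call will fail.
        self.killed = True

    def check(self, tool, estimated_cost_usd):
        """Call before each tool invocation; raises if a limit would be hit."""
        if self.killed:
            raise GuardrailViolation("kill-switch active")
        if tool not in self.allowed_tools:
            raise GuardrailViolation(f"tool not on allow-list: {tool}")
        if self.steps + 1 > self.max_steps:
            raise GuardrailViolation("step budget exhausted")
        if self.cost_usd + estimated_cost_usd > self.max_cost_usd:
            raise GuardrailViolation("cost budget exhausted")
        if self._clock() - self._started > self.max_seconds:
            raise GuardrailViolation("time cap exceeded")
        # Only count the step once every check has passed.
        self.steps += 1
        self.cost_usd += estimated_cost_usd
```

An agent loop would call `check(tool, cost)` before each action and treat `GuardrailViolation` as a hard stop, not as something to retry.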

Human-in-the-Loop Patterns

Not every task needs human review. But some actions must never be fully automated. Here are four patterns to balance autonomy with oversight:

- Approve-plan: agent proposes → human approves the plan before execution.

- Approve-action: plan runs, but specific actions (payments, deletions) require approval.

- Shadow mode: agent runs silently, logging what it would have done. Perfect for testing.

- Confidence thresholding: let low-risk, high-confidence tasks auto-run, but route low-confidence cases to humans.

Use a mix depending on risk level.
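
The approve-action, shadow-mode, and confidence-threshold patterns can be combined in one routing function. This is only a sketch: the `route_action` name, the set of high-risk action types, and the 0.8 threshold are assumptions chosen for illustration, and how an agent produces a confidence score is left open.

```python
from enum import Enum


class Route(Enum):
    AUTO_RUN = "auto_run"
    HUMAN_APPROVAL = "human_approval"
    LOG_ONLY = "log_only"


# Assumption: action types that must never run without approval.
HIGH_RISK_ACTIONS = {"payment", "deletion"}


def route_action(action_type, confidence, shadow_mode=False, min_confidence=0.8):
    """Decide how a proposed action should be handled."""
    if shadow_mode:
        # Shadow mode: record what would have happened, execute nothing.
        return Route.LOG_ONLY
    if action_type in HIGH_RISK_ACTIONS:
        # Approve-action: high-risk actions always go to a human.
        return Route.HUMAN_APPROVAL
    if confidence < min_confidence:
        # Confidence thresholding: uncertain cases go to a human.
        return Route.HUMAN_APPROVAL
    return Route.AUTO_RUN
```

Checking risk before confidence means a payment is reviewed even when the agent reports high confidence.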

Observability Dashboards

Once logs are structured, you can visualize key metrics. Dashboards should track:

- Success rate per task type.

- Cost-to-success (CTS): average token/API spend per completed task.

- Time-to-success (TTS): end-to-end task latency.

- Steps-to-success (StS): average number of steps an agent takes per completed task.

- Loop rate: frequency of repeated steps.

- Intervention rate: how often humans had to step in.

These metrics quickly reveal when an agent version drifts or fails silently.
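
To make the definitions concrete, here is a small sketch that computes these metrics from per-run records. The `RunRecord` fields are hypothetical, and two choices are assumptions, not standard definitions: the "to-success" metrics average over successful runs only, and loop rate is repeated steps divided by total steps.

```python
from dataclasses import dataclass


@dataclass
class RunRecord:
    succeeded: bool
    cost_usd: float
    seconds: float
    steps: int
    repeated_steps: int
    human_intervened: bool


def _mean(values):
    # None signals "no successful runs yet" instead of dividing by zero.
    return sum(values) / len(values) if values else None


def summarize(runs):
    """Aggregate dashboard metrics for one task type or agent version."""
    if not runs:
        raise ValueError("need at least one run")
    wins = [r for r in runs if r.succeeded]
    total_steps = sum(r.steps for r in runs)
    return {
        "success_rate": len(wins) / len(runs),
        "cost_to_success": _mean([r.cost_usd for r in wins]),
        "time_to_success": _mean([r.seconds for r in wins]),
        "steps_to_success": _mean([r.steps for r in wins]),
        "loop_rate": (sum(r.repeated_steps for r in runs) / total_steps
                      if total_steps else 0.0),
        "intervention_rate": sum(r.human_intervened for r in runs) / len(runs),
    }
```

Computing the same summary per agent version and comparing the results side by side is one simple way to spot a regression after a prompt or model change.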

Red-Team Checklist

Before trust, test. Here’s a lightweight red-team playbook for agents:

- Inject adversarial prompts (malicious instructions hidden in text).

- Simulate API/tool failures (timeouts, malformed responses).

- Trigger loop scenarios (ambiguous or impossible goals).

- Attempt permission escalations (access to blocked tools).

- Run data exfiltration tests (check if PII escapes).

- Social-engineering attempts (requests to impersonate humans).

Running this regularly keeps agents resilient.
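
As one example, the tool-failure item can be automated with fake tools that misbehave on purpose. The sketch below assumes tools are plain functions returning JSON strings; `call_tool_safely` stands in for whatever wrapper your agent uses and is hypothetical, as are the fake tools.

```python
import json


def timeout_tool(_query):
    # Simulates a tool that never answers in time.
    raise TimeoutError("simulated tool timeout")


def malformed_tool(_query):
    # Simulates a tool that returns broken JSON.
    return "{not valid json"


def healthy_tool(_query):
    return json.dumps({"result": "ok"})


def call_tool_safely(tool, query):
    """Wrapper under test: returns (ok, value_or_error) and never raises
    for the two simulated failure modes."""
    try:
        return True, json.loads(tool(query))
    except TimeoutError as exc:
        return False, f"timeout: {exc}"
    except json.JSONDecodeError as exc:
        return False, f"malformed response: {exc.msg}"


def run_failure_suite():
    """Run each fake tool and report whether the wrapper behaved as expected."""
    cases = {
        "timeout": (timeout_tool, False),
        "malformed": (malformed_tool, False),
        "healthy": (healthy_tool, True),
    }
    results = {}
    for name, (tool, expected_ok) in cases.items():
        ok, _ = call_tool_safely(tool, "ping")
        results[name] = (ok == expected_ok)
    return results
```

A suite like this can run in CI, so a change that reintroduces an unhandled failure shows up before release.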

Incident Response Runbook

Even with guardrails, incidents happen. Prepare a clear process:

- Detect: Dashboard alerts on failure or anomaly.

- Contain: Activate kill-switch or revoke agent credentials.

- Collect: Export logs (Goal, Plan, Tool Call, Decision, Failure/Intervention) for analysis.

- Mitigate: Roll back changes, revoke access, notify affected parties.

- Post-mortem: Identify root cause, update guardrails or prompts.

- Retest: Re-run red-team scenarios to confirm the fix.

Having this runbook printed or bookmarked can save hours during a real incident.
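
If you want the process enforced and not just documented, the six stages can be tracked in code so none is skipped. This is a minimal sketch; the `Incident` class and its fields are hypothetical and not tied to any incident-management tool.

```python
from enum import IntEnum


class Stage(IntEnum):
    DETECT = 1
    CONTAIN = 2
    COLLECT = 3
    MITIGATE = 4
    POST_MORTEM = 5
    RETEST = 6


class Incident:
    """Tracks one incident and enforces the stage order from the runbook."""

    def __init__(self, incident_id):
        self.incident_id = incident_id
        self.completed = []  # list of (Stage, note) in completion order

    @property
    def closed(self):
        return len(self.completed) == len(Stage)

    def advance(self, stage, note):
        if self.closed:
            raise ValueError("incident already closed")
        expected = Stage(len(self.completed) + 1)
        if stage is not expected:
            raise ValueError(f"expected {expected.name}, got {stage.name}")
        self.completed.append((stage, note))
```

Requiring a note at each stage also leaves a timeline that the post-mortem can reuse.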

FAQ

Q1. What is AgentOps in AI?

AgentOps refers to the operational practices of deploying, monitoring, and governing autonomous AI agents. It includes logging goals, plans, and tool calls, setting guardrails, adding human-in-the-loop controls, and creating incident response systems.

Q2. Why do autonomous AI agents need monitoring?

Because agents make independent decisions, they can loop, overspend, or take unsafe actions if unchecked. Monitoring ensures transparency, detects failures early, and makes agents more reliable and accountable.

Q3. What guardrails should be in place for AI agents?

The most effective guardrails are policy checks, allow-lists of tools and domains, cost/step limits, timeouts, and a kill-switch. These prevent runaway behavior and enforce compliance.

Q4. How do you test the safety of an AI agent?

You can red-team agents by running adversarial prompts, simulating tool failures, testing loops, and attempting permission escalations. Regular testing ensures the agent is resilient under real-world stress.

Q5. Which AI agent tasks should always require human approval?

High-risk actions like financial transactions, data deletion, public communication, and anything involving sensitive personal data should always be reviewed and approved by a human.

Conclusion

Autonomous AI agents are here to stay, but safe deployment requires discipline. AgentOps is the missing layer: structured logs, guardrails, oversight patterns, dashboards, red-team tests, and incident response.

If you adopt even half of these practices, your agents will be safer, cheaper, and easier to trust — and you’ll avoid the common mistakes of treating them as black boxes.

Next steps:

- Run your agents in shadow mode for a week.

- Define a schema for the Decision Log and start recording to it.

- Try at least two red-team tests.

Do this, and you’ll have an AgentOps foundation most teams still lack in 2025.
