Beyond Accuracy: Top 7 Powerful Evaluation Metrics That Redefine Autonomous Decision Agents

Introduction: Why Accuracy Isn’t Enough

In the age of autonomous decision-making agents, we’ve entered a world where AI doesn’t just predict — it acts. From financial trading bots and autonomous vehicles to intelligent process agents managing supply chains, these systems make real-world decisions with direct consequences.

Traditionally, the performance of AI models has been measured using one dominant metric — accuracy. But for decision-making agents, accuracy alone paints an incomplete picture. In complex, uncertain, and high-stakes environments, a “correct” prediction doesn’t always mean the best decision.

To truly understand and improve the performance of these agents, we need a broader evaluation framework — one that goes beyond correctness and measures adaptability, ethics, fairness, and long-term impact.

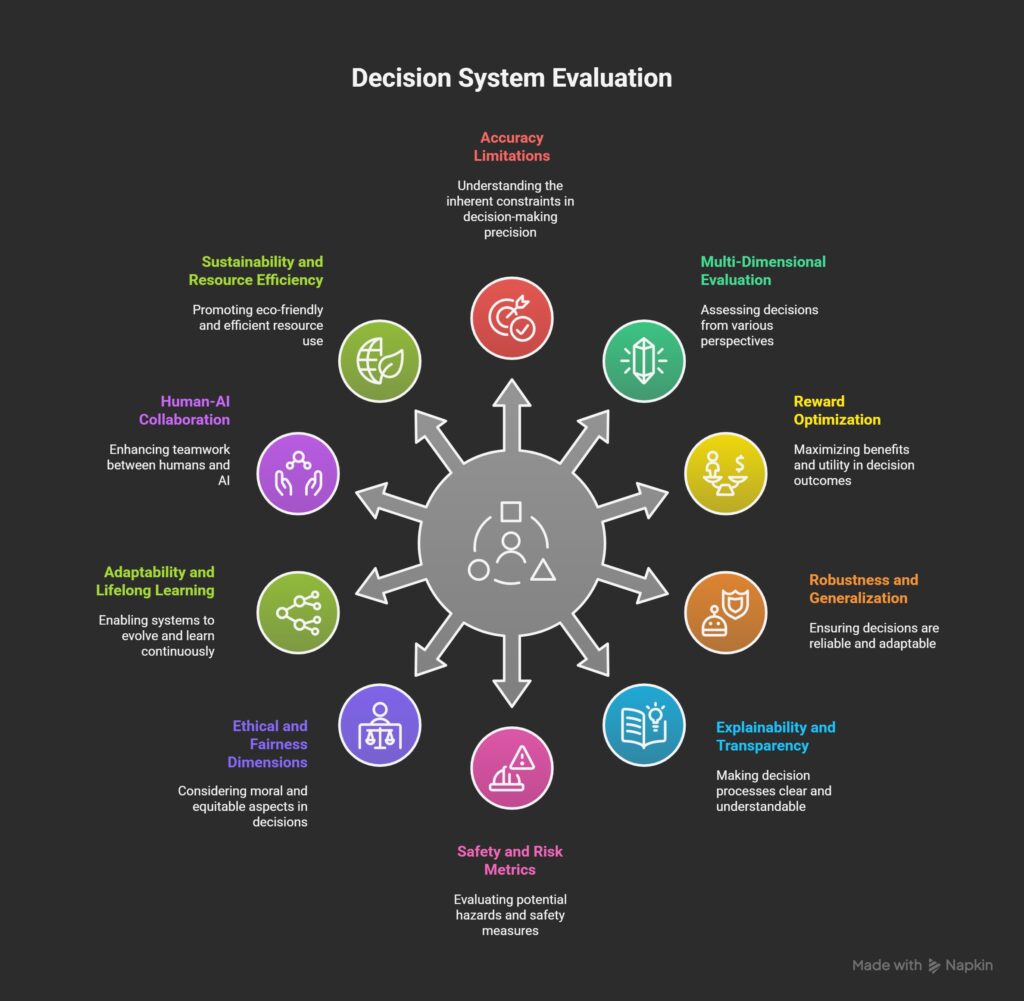

1. The Limitations of Accuracy in Decision Systems

Accuracy works well when the problem is static and well-defined, such as classifying images or detecting spam. Autonomous agents, by contrast, operate in dynamic environments, often with incomplete data and evolving objectives.

For example:

- A self-driving car might avoid every accident (accurate in the narrow sense), but if it drives so cautiously that it blocks traffic, it fails its utility goal.

- A trading agent might predict short-term price moves correctly and trade profitably, yet destabilize its portfolio in the long run.

These examples highlight a critical insight — accuracy doesn’t measure the quality of decisions or their contextual impact.

2. Moving Toward Multi-Dimensional Evaluation

Decision-making agents need a multi-dimensional evaluation approach — combining quantitative metrics (like reward optimization and efficiency) with qualitative ones (like ethical compliance and explainability).

3. Reward and Utility Optimization

For agents that learn through reinforcement learning or optimization algorithms, reward functions define what “success” means.

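However, evaluating agents solely on cumulative reward can be misleading: an agent can reach a high total while wasting resources, performing erratically across conditions, or benefiting some stakeholders at the expense of others.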

A better approach is to assess:

- Reward Efficiency – How much reward is achieved per action or per unit of resource.

- Reward Stability – Does the agent maintain consistent performance under changing conditions?

- Reward Fairness – Are rewards distributed equitably among stakeholders affected by the agent’s decisions?

In complex environments, multi-objective optimization (balancing efficiency, safety, and fairness) becomes essential.
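
As a rough illustration of the first two checks above, here is a minimal Python sketch. The function names and the choice of coefficient of variation as the stability measure are assumptions made for this example, not standard definitions.

```python
from statistics import mean, pstdev


def reward_efficiency(total_reward: float, actions_taken: int) -> float:
    """Reward earned per action; higher is better."""
    if actions_taken <= 0:
        raise ValueError("actions_taken must be positive")
    return total_reward / actions_taken


def reward_stability(rewards_by_condition: list[float]) -> float:
    """Coefficient of variation of reward across conditions; lower is more stable."""
    avg = mean(rewards_by_condition)
    if avg == 0:
        raise ValueError("mean reward is zero; coefficient of variation is undefined")
    return pstdev(rewards_by_condition) / abs(avg)


# Hypothetical numbers: 300 reward over 60 actions, measured in three conditions.
print(reward_efficiency(300.0, 60))            # 5.0
print(reward_stability([90.0, 100.0, 110.0]))  # roughly 0.08
```

Two agents with the same cumulative reward can differ sharply on both numbers, which is the point of reporting them separately.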

4. Robustness and Generalization

An intelligent agent should perform reliably across diverse scenarios — not just in training or controlled simulations.

Metrics that capture robustness include:

- Domain Generalization Score: How well the agent adapts to new, unseen situations.

- Stress-Test Performance: How the agent behaves under extreme or adversarial conditions.

- Variance of Outcome: How consistent its performance remains over repeated trials.

A robust agent isn’t just good at one task; it’s resilient when the rules of the game change.
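
Two of the robustness measures above can be approximated with very little code. The sketch below assumes you already have per-episode scores from training-like scenarios and from unseen scenarios; the names `generalization_gap` and `outcome_variance` are illustrative, not established terms.

```python
from statistics import mean, pvariance


def generalization_gap(seen_scores: list[float], unseen_scores: list[float]) -> float:
    """Mean score on seen scenarios minus mean score on unseen ones.

    A gap near zero suggests the agent carries its performance over to new situations.
    """
    return mean(seen_scores) - mean(unseen_scores)


def outcome_variance(trial_scores: list[float]) -> float:
    """Population variance of scores over repeated trials of the same scenario."""
    return pvariance(trial_scores)


# Hypothetical scores for illustration only.
print(generalization_gap([0.9, 0.8], [0.7, 0.6]))
print(outcome_variance([1.0, 2.0, 3.0]))
```

Stress-test performance can reuse the same gap calculation, with the unseen scores taken from deliberately extreme or adversarial scenarios.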

5. Explainability and Transparency

Decision agents are increasingly being deployed in regulated and human-critical contexts (finance, healthcare, defense). Stakeholders need to understand why an agent made a certain decision.

Metrics here include:

- Explainability Index: How interpretable are the agent’s internal reasoning steps?

- Decision Traceability: Can we trace each decision to a specific input or state?

- Human Interpretability Score: How easily can a non-expert understand the rationale behind a decision?

Explainability isn’t just an ethical checkbox — it’s a trust-building mechanism between humans and machines.

6. Safety and Risk Metrics

A decision that is accurate but unsafe can be catastrophic in real-world applications.

For autonomous systems, safety evaluation should include:

- Risk Exposure Score: Probability and impact of catastrophic failures.

- Recovery Capability: How quickly the agent can return to safe operation after an error.

- Uncertainty Calibration: How well does the agent recognize and respond to its own uncertainty?

These metrics help verify that an agent prioritizes safe outcomes, even when doing so costs some accuracy.
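
Uncertainty calibration is the easiest of these to quantify. One common measure is expected calibration error (ECE), which compares how confident the agent says it is with how often it is actually right. The sketch below is a plain-Python version using equal-width confidence bins; the bin count of 10 is an assumption, and real evaluations may choose differently.

```python
def expected_calibration_error(
    confidences: list[float], correct: list[bool], n_bins: int = 10
) -> float:
    """Weighted average gap between stated confidence and observed accuracy."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("inputs must be non-empty and of equal length")

    # Group each decision into an equal-width confidence bin.
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # a confidence of 1.0 goes in the last bin
        bins[idx].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for items in bins:
        if not items:
            continue
        avg_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        ece += (len(items) / total) * abs(avg_conf - accuracy)
    return ece


# Hypothetical agent: claims 90% confidence four times, is right three times.
print(expected_calibration_error([0.9, 0.9, 0.9, 0.9], [True, True, True, False]))
```

A value close to zero means the agent's confidence can be taken at face value; a large value means it is over- or under-confident.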

7. Ethical and Fairness Dimensions

Decision agents often operate in socially sensitive domains — hiring systems, lending algorithms, or medical diagnostics. Biases in data or logic can lead to unfair outcomes.

Fairness evaluation includes:

- Demographic Parity: Do different groups receive favorable decisions at similar rates?

- Equalized Odds: Do different groups experience similar true positive and false positive rates?

- Long-Term Fairness: Does the agent’s policy amplify or reduce inequality over time?

Integrating ethical audits into performance evaluation helps ensure that autonomy does not come at the cost of justice.
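
The first two fairness checks above reduce to comparing simple rates between groups. Here is a minimal sketch for two groups, with decisions and true labels encoded as 0 or 1. The function names are made up for this example, and the code assumes each group contains at least one positive and one negative label.

```python
def positive_rate(decisions: list[int]) -> float:
    """Share of decisions that are favorable (encoded as 1)."""
    return sum(decisions) / len(decisions)


def demographic_parity_gap(decisions_a: list[int], decisions_b: list[int]) -> float:
    """Absolute difference in favorable-decision rates between two groups."""
    return abs(positive_rate(decisions_a) - positive_rate(decisions_b))


def tpr_fpr(decisions: list[int], labels: list[int]) -> tuple[float, float]:
    """Return (true positive rate, false positive rate) for one group."""
    tp = sum(1 for d, y in zip(decisions, labels) if d == 1 and y == 1)
    fp = sum(1 for d, y in zip(decisions, labels) if d == 1 and y == 0)
    positives = sum(labels)
    negatives = len(labels) - positives
    return tp / positives, fp / negatives


def equalized_odds_gap(dec_a, lab_a, dec_b, lab_b) -> float:
    """Largest difference in TPR or FPR between the two groups."""
    tpr_a, fpr_a = tpr_fpr(dec_a, lab_a)
    tpr_b, fpr_b = tpr_fpr(dec_b, lab_b)
    return max(abs(tpr_a - tpr_b), abs(fpr_a - fpr_b))


# Hypothetical decisions and outcomes for two groups.
dec_a, lab_a = [1, 1, 0, 0], [1, 0, 1, 0]
dec_b, lab_b = [1, 0, 0, 0], [1, 1, 0, 0]
print(demographic_parity_gap(dec_a, dec_b))            # 0.25
print(equalized_odds_gap(dec_a, lab_a, dec_b, lab_b))  # 0.5
```

A gap of zero on either measure means the two groups are treated identically by that criterion. Note that the two criteria can disagree, so it is worth reporting both.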

8. Adaptability and Lifelong Learning

Unlike static AI models, many autonomous agents keep learning from their environment after deployment.

Key metrics here are:

- Learning Efficiency: How fast the agent improves with new experiences.

- Transfer Learning Score: Can it apply past knowledge to new but related tasks?

- Catastrophic Forgetting Index: How much previously learned information is lost over time?

The most valuable decision agents are those that evolve intelligently rather than rigidly following past patterns.
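
One simple way to put a number on forgetting is to track accuracy on each earlier task as the agent keeps learning, then compare the best earlier score with the final one. The sketch below assumes that kind of per-task history is available; the `forgetting_index` name and the averaging scheme are choices made for this example.

```python
def forgetting_index(accuracy_history: dict[str, list[float]]) -> float:
    """Average drop from each task's best earlier accuracy to its final accuracy.

    Positive values mean knowledge was lost; a negative value means later
    learning actually improved performance on the earlier task.
    """
    drops = []
    for scores in accuracy_history.values():
        if len(scores) < 2:
            continue  # need at least one earlier score and a final score
        drops.append(max(scores[:-1]) - scores[-1])
    return sum(drops) / len(drops) if drops else 0.0


# Hypothetical history: accuracy on each task, measured after every training phase.
history = {
    "task_a": [0.9, 0.8, 0.6],
    "task_b": [0.7, 0.7],
}
print(forgetting_index(history))
```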

9. Human-AI Collaboration Metrics

In most real-world systems, agents don’t work alone — they collaborate with humans.

Evaluating synergy is just as important as measuring solo performance.

Possible metrics:

- Trust Calibration Score: Is the human’s trust level aligned with the agent’s true capability?

- Decision Overlap Index: How often do human and AI decisions agree?

- Collaborative Efficiency: Does AI assistance improve team outcomes without increasing cognitive load?

Agents that can understand and adapt to human intent are more effective and more readily accepted.
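
Two of the collaboration metrics above lend themselves to a quick calculation. In this sketch, the overlap index is the share of cases where human and agent chose the same action, and trust calibration is approximated as the gap between how often the human relies on the agent and how often the agent is right. Both definitions are simplifying assumptions for illustration.

```python
def decision_overlap(human: list[str], agent: list[str]) -> float:
    """Fraction of cases where the human and the agent made the same decision."""
    if not human or len(human) != len(agent):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(h == a for h, a in zip(human, agent)) / len(human)


def trust_calibration_gap(reliance_rate: float, agent_accuracy: float) -> float:
    """Positive means over-trust, negative means under-trust, zero means aligned."""
    return reliance_rate - agent_accuracy


# Hypothetical decisions on four cases.
human = ["approve", "deny", "approve", "deny"]
agent = ["approve", "approve", "approve", "deny"]
print(decision_overlap(human, agent))    # 0.75
print(trust_calibration_gap(0.9, 0.7))   # positive: the human over-trusts the agent
```

High overlap is not automatically good. If the human simply defers to the agent, overlap rises while the value of human oversight falls, so it helps to read the two numbers together.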

10. Sustainability and Resource Efficiency

In large-scale deployments, the cost of autonomy — in terms of energy, compute, or carbon footprint — also matters.

Metrics to consider:

- Energy-to-Performance Ratio: Energy consumption per successful decision or task.

- Carbon Cost per Episode: Environmental footprint of training and inference.

- Computational Scalability: Performance per hardware or cost unit.

Evaluating agents on sustainability ensures that progress is both intelligent and responsible.
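
The first two sustainability metrics are simple ratios once energy use has been measured. The sketch below assumes energy is logged per run and that a grid carbon intensity figure is available; the value used here is a placeholder, not a real measurement.

```python
def energy_per_success(energy_joules: float, successful_decisions: int) -> float:
    """Energy spent per successful decision; lower is better."""
    if successful_decisions <= 0:
        raise ValueError("successful_decisions must be positive")
    return energy_joules / successful_decisions


def carbon_cost_kg(energy_kwh: float, grid_intensity_kg_per_kwh: float) -> float:
    """Estimated CO2 in kilograms for a given energy use and grid intensity."""
    return energy_kwh * grid_intensity_kg_per_kwh


# Hypothetical episode: 500 J for 100 successful decisions,
# and 2 kWh of training on a grid emitting 0.5 kg CO2 per kWh (placeholder value).
print(energy_per_success(500.0, 100))  # 5.0
print(carbon_cost_kg(2.0, 0.5))        # 1.0
```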

Conclusion: The Future of Evaluating Decision Agents

As we move toward next-generation autonomous systems, evaluation metrics must evolve from narrow measures of accuracy to holistic frameworks of trust, adaptability, and impact.

Decision agents are not just predictive engines — they are cognitive collaborators influencing how humans and machines co-create decisions.

A truly “intelligent” agent will not only choose correctly but also choose wisely — safely, fairly, transparently, and sustainably.

In the near future, success won’t be defined by whether an AI is accurate — but by whether it is accountable.

