AgentOps in 2025: The Complete Playbook to Monitor and Safely Control AI Agents

Introduction: Why AgentOps Matters in 2025

By now, you’ve probably heard about AI agents somewhere — and that’s why you’re here, reading about them. The truth is, the world won’t pause for us to catch up. Technology keeps moving forward, and it’s on us to adapt and learn.

That’s where AI agents come in. They’re no longer futuristic concepts. Today, autonomous AI agents are already managing tasks like budgeting, customer support, research, and even business negotiations.

But with this growing autonomy comes a critical question: How do we monitor, trust, and control these digital assistants without losing visibility?

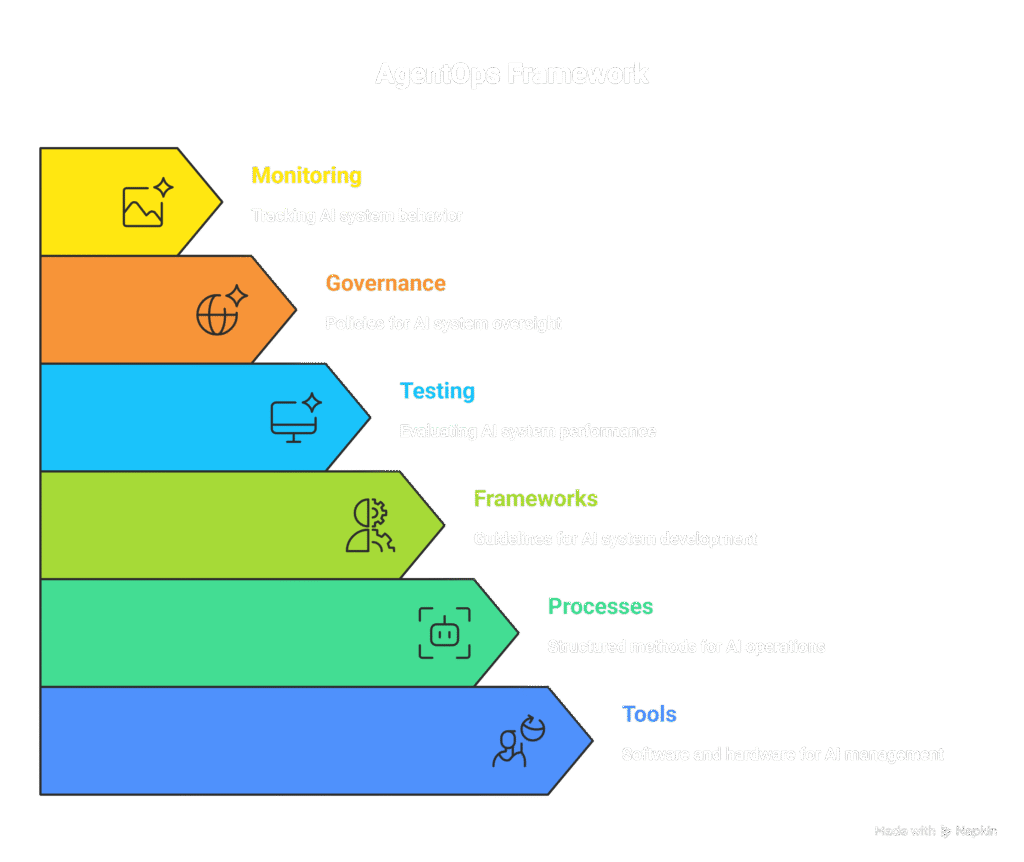

This is where AgentOps (Agent Operations) enters the scene. Originally borrowed from the DevOps playbook, AgentOps refers to the tools, processes, and frameworks used to test, govern, and monitor autonomous AI systems. In 2025, AgentOps is becoming essential not just for enterprises, but also for individuals who use AI agents in everyday life.

In this guide, we’ll break down a practical playbook for AgentOps that anyone — from a business leader to a curious AI enthusiast — can apply to audit, monitor, and stay in control of their AI agents.

What is AgentOps? (And Why You Should Care)

At its core, AgentOps is about accountability.

When you delegate decisions to AI agents — whether to optimize finances, handle scheduling, or run parts of your business — you need to ensure they are:

- Reliable → Do they execute tasks correctly and consistently?

- Transparent → Can you explain why they took a certain action?

- Secure → Are they handling sensitive data responsibly?

- Controllable → Can you intervene or shut them down when needed?

AgentOps answers these questions by combining observability tools, logging, safety checks, and governance rules around AI agents.

Related read : Autonomous Decision-Making AI: The Intelligent Systems of 2025

The AgentOps Playbook: 7 Things to Monitor in Any AI Agent

Here’s a step-by-step framework you can apply today:

1. Identity & Authentication

- Ensure your AI agent has a unique identity.

- Use API tokens or OAuth instead of sharing raw credentials.

- Apply the principle of least privilege: the agent gets access only to what it needs for its task.
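
To make the identity and least-privilege ideas above concrete, here is a minimal sketch. The `AgentIdentity` class, the agent ID, and the scope names are all hypothetical and chosen only for illustration; a real setup would rely on your provider's API tokens or OAuth scopes.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical per-agent identity with an explicit allow-list of scopes."""
    agent_id: str
    scopes: frozenset[str]


def is_allowed(identity: AgentIdentity, required_scope: str) -> bool:
    """Least privilege: deny unless the scope was explicitly granted."""
    return required_scope in identity.scopes


# Illustrative agent: it can read and categorize expenses, nothing else.
expense_agent = AgentIdentity(
    agent_id="expense-agent-01",
    scopes=frozenset({"expenses:read", "expenses:categorize"}),
)

print(is_allowed(expense_agent, "expenses:read"))  # True
print(is_allowed(expense_agent, "payments:send"))  # False
```

The point of the design is the default: anything not on the list is refused, so a new capability has to be granted on purpose.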

2. Data Access & Logging

- Maintain a data access log — every time the agent queries a database, pulls user info, or touches sensitive data, it must be recorded.

- Store logs where they cannot be silently altered. Append-only or write-once (immutable) storage is ideal, and hash-chained or blockchain-backed logs make any tampering detectable.
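
One simple way to make a data access log tamper-evident is to chain the entries together with hashes, so that editing an old entry breaks every hash after it. The sketch below uses only the Python standard library. The field names and the helpers `append_entry` and `verify_chain` are assumptions for illustration, not the API of any particular logging tool.

```python
from __future__ import annotations

import hashlib
import json
import time

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], agent_id: str, action: str, resource: str) -> dict:
    """Append one access record, linked to the hash of the previous record."""
    body = {
        "timestamp": time.time(),
        "agent_id": agent_id,
        "action": action,
        "resource": resource,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Return False if any entry was edited or the links were broken."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only detects tampering; it does not prevent it. Keeping a copy of the latest hash somewhere the agent cannot write to is what makes the check meaningful.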

3. Decision Transparency

- Enable decision tracing: every action should be linked to an input + reasoning path.

- Use “explainability reports” where the agent outlines its decision-making logic in human-readable form.
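
A decision trace can be as simple as a record that ties each action to its inputs and the reasoning steps the agent reported. The following is a rough sketch; the `DecisionTrace` class and its fields are hypothetical, and the reasoning text would come from whatever your agent framework exposes.

```python
from __future__ import annotations

import uuid
from dataclasses import dataclass, field


@dataclass
class DecisionTrace:
    """Hypothetical record linking one action to its inputs and reasoning."""
    action: str
    inputs: dict
    reasoning: list[str]
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)

    def report(self) -> str:
        """Render a short, human-readable explainability report."""
        lines = [f"Action: {self.action}", "Inputs:"]
        lines += [f"  {key} = {value}" for key, value in self.inputs.items()]
        lines.append("Reasoning:")
        lines += [f"  {i}. {step}" for i, step in enumerate(self.reasoning, start=1)]
        return "\n".join(lines)


trace = DecisionTrace(
    action="categorize expense as 'Travel'",
    inputs={"merchant": "City Taxi", "amount": 18.5},
    reasoning=["Merchant name matches a transport provider", "Amount is typical for a local ride"],
)
print(trace.report())
```

Storing the `trace_id` alongside the log entry for the same action lets you jump from "what happened" to "why" during a review.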

4. Error Handling & Fail-Safes

- Set thresholds → if an agent fails 3 times in a row, it should auto-pause.

- Add a kill switch you can trigger instantly.
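
The two fail-safes above can be sketched as a small wrapper around whatever function runs the agent's task. `AgentGuard` is a hypothetical name, and the threshold of 3 comes from the rule of thumb above rather than from any standard.

```python
class AgentGuard:
    """Pauses the agent after repeated failures and supports a manual kill switch."""

    def __init__(self, max_consecutive_failures: int = 3):
        self.max_consecutive_failures = max_consecutive_failures
        self.consecutive_failures = 0
        self.paused = False
        self.killed = False

    def kill(self) -> None:
        """Kill switch: block all further runs until a new guard is created."""
        self.killed = True

    def resume(self) -> None:
        """Manually clear an auto-pause after a human has investigated."""
        self.consecutive_failures = 0
        self.paused = False

    def run(self, task, *args, **kwargs):
        if self.killed or self.paused:
            raise RuntimeError("agent is stopped; human intervention required")
        try:
            result = task(*args, **kwargs)
        except Exception:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.max_consecutive_failures:
                self.paused = True  # auto-pause on the Nth failure in a row
            raise
        self.consecutive_failures = 0  # any success resets the streak
        return result
```

Note that a success resets the counter, so only failures in a row trigger the pause, and that resuming is a deliberate manual step rather than something the agent can do for itself.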

5. Performance Metrics

- Track: task completion rate, average runtime, error frequency.

- Define an acceptable SLA (service level agreement) for your personal or business agents.
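
If you are tracking runs yourself rather than through a dashboard, the three metrics above take only a few lines to compute. This is an illustrative sketch: `TaskRun`, `summarize`, and the target values in `meets_target` are assumptions you would replace with your own definitions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class TaskRun:
    succeeded: bool
    runtime_seconds: float


def summarize(runs: list[TaskRun]) -> dict:
    """Compute completion rate, error rate, and average runtime."""
    if not runs:
        return {"completion_rate": 0.0, "error_rate": 0.0, "avg_runtime_seconds": 0.0}
    total = len(runs)
    completed = sum(1 for run in runs if run.succeeded)
    return {
        "completion_rate": completed / total,
        "error_rate": (total - completed) / total,
        "avg_runtime_seconds": sum(run.runtime_seconds for run in runs) / total,
    }


def meets_target(summary: dict, min_completion_rate: float = 0.95,
                 max_avg_runtime_seconds: float = 5.0) -> bool:
    """Check the summary against example targets (placeholder values)."""
    return (summary["completion_rate"] >= min_completion_rate
            and summary["avg_runtime_seconds"] <= max_avg_runtime_seconds)


runs = [TaskRun(True, 1.0), TaskRun(True, 3.0), TaskRun(False, 2.0), TaskRun(True, 2.0)]
summary = summarize(runs)
print(summary["completion_rate"])      # 0.75
print(summary["avg_runtime_seconds"])  # 2.0
print(meets_target(summary))           # False
```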

6. Security & Privacy Controls

- Encrypt sensitive interactions.

- Regularly rotate credentials the agent uses.

- Never give full unrestricted system access.
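
Credential rotation is easier to keep up with if something flags stale credentials for you. Here is a small sketch of an age check; the 30-day window is only an example policy, and `needs_rotation` is a hypothetical helper, not part of any secrets manager.

```python
from datetime import datetime, timedelta, timezone


def needs_rotation(issued_at: datetime, now: datetime,
                   max_age: timedelta = timedelta(days=30)) -> bool:
    """Return True once a credential is at least `max_age` old."""
    return now - issued_at >= max_age


issued = datetime(2025, 1, 1, tzinfo=timezone.utc)
print(needs_rotation(issued, now=datetime(2025, 1, 10, tzinfo=timezone.utc)))  # False
print(needs_rotation(issued, now=datetime(2025, 2, 15, tzinfo=timezone.utc)))  # True
```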

7. Human-in-the-Loop (HITL)

- Critical decisions (finance, legal, healthcare) should always require human sign-off.

- Example: agent drafts an investment transfer → human approves before execution.
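
The draft-then-approve flow in the example above might look like the sketch below. The category list mirrors the one in this section; the `ProposedAction` class and the `execute` function are hypothetical stand-ins for whatever actually performs the action.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

CRITICAL_CATEGORIES = {"finance", "legal", "healthcare"}


class Status(Enum):
    PENDING = "pending"
    EXECUTED = "executed"


@dataclass
class ProposedAction:
    description: str
    category: str
    status: Status = Status.PENDING
    approved_by: Optional[str] = None


def requires_sign_off(action: ProposedAction) -> bool:
    return action.category in CRITICAL_CATEGORIES


def execute(action: ProposedAction, approved_by: Optional[str] = None) -> Status:
    """Run the action only if it is non-critical or a human has approved it."""
    if requires_sign_off(action) and approved_by is None:
        return action.status  # stays PENDING until someone signs off
    action.approved_by = approved_by
    action.status = Status.EXECUTED  # the real side effect would happen here
    return action.status


transfer = ProposedAction("Move funds to the investment account", category="finance")
print(execute(transfer))                       # Status.PENDING
print(execute(transfer, approved_by="owner"))  # Status.EXECUTED
```

Recording who approved the action matters as much as the gate itself, since it gives the audit log a named human for every critical decision.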

Related read : AI Safety: What Every Reader Should Know in 2025

Tools & Platforms for AgentOps (2025 Edition)

Here are some popular tools and frameworks gaining traction:

- AgentOps (open-source) → purpose-built for logging, monitoring, and debugging LLM agents.

- LangSmith (by LangChain) → observability and tracing for multi-agent workflows.

- Weights & Biases / MLflow → MLOps tools adapted for agent monitoring.

- Custom dashboards via Grafana + Prometheus → to track performance metrics.

- AutoGen & CrewAI integrations → frameworks that already support observability hooks.

Pro Tip: Even if you’re not technical, you can use hosted dashboards (such as those offered by AgentOps or LangSmith) to view logs, errors, and decision traces without touching code.

Related read: AI Agents Breakthroughs: 10 Powerful Real-World Examples in 2025

Step-by-Step: Setting Up AgentOps for a Personal AI Agent

Let’s walk through an example:

Scenario: You’re using an AI agent to automatically categorize business expenses and pay monthly bills.

- Provision identity → create a separate API token just for this agent.

- Enable logging → connect the agent to the AgentOps dashboard or LangSmith.

- Set guardrails → let the agent approve only transactions under Rs 200 (or whatever limit you consider appropriate) on its own; anything larger needs human sign-off.

- Add monitoring → send alerts by text message or email when unusual patterns appear.

- Test in sandbox mode → simulate a month of expenses in test mode before going live.

- Deploy with review → a human reviews the first 10 transactions.

- Ongoing audit → run a monthly review of the logs and rotate credentials.
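
The guardrail and review steps in this scenario can be expressed as one small policy object. This is a sketch under stated assumptions: `PaymentPolicy` is a hypothetical name, the limit of 200 and the first-10 review window come from the steps above, and the currency is whatever you use.

```python
from dataclasses import dataclass


@dataclass
class PaymentPolicy:
    """Decides whether a payment needs human sign-off before it is executed."""
    auto_approve_limit: float = 200.0  # amounts below this may run unattended
    review_first_n: int = 10           # a human reviews the first N transactions
    executed_count: int = 0

    def needs_human(self, amount: float) -> bool:
        if self.executed_count < self.review_first_n:
            return True  # still in the initial review period
        return amount >= self.auto_approve_limit

    def record_executed(self) -> None:
        self.executed_count += 1


policy = PaymentPolicy()
print(policy.needs_human(50.0))   # True: still within the first 10 transactions

for _ in range(10):
    policy.record_executed()

print(policy.needs_human(50.0))   # False: small amount, review period over
print(policy.needs_human(500.0))  # True: above the limit
```

Running your sandbox month through a policy like this before going live shows you how often the agent would have asked for sign-off, which is a useful check on whether the limit is set sensibly.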

This simple process transforms a “black-box agent” into a trustworthy financial assistant.

Related read : Everyday Finance, Automated: How Autonomous AI Agents Can Budget, Save and Protect Your Money in 2025

Privacy & Ethical Considerations

AgentOps isn’t just about performance — it’s about responsibility.

- Bias monitoring → agents can reinforce unfair patterns; review decision logs for red flags.

- Data minimization → don’t let agents hoard unnecessary personal data.

- Ethical guidelines → align agent behavior with human values (fairness, accountability, transparency).
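
Data minimization, in particular, is easy to enforce mechanically: pass the agent only the fields its task needs. Below is a minimal sketch using an allow-list. The field names and the `minimize` helper are made up for the expense example and are not tied to any tool.

```python
# Fields the expense agent actually needs (illustrative allow-list).
ALLOWED_FIELDS = frozenset({"merchant", "amount", "date", "category"})


def minimize(record: dict, allowed: frozenset = ALLOWED_FIELDS) -> dict:
    """Return a copy of the record containing only allow-listed fields."""
    return {key: value for key, value in record.items() if key in allowed}


raw = {
    "merchant": "City Taxi",
    "amount": 18.5,
    "date": "2025-03-02",
    "card_number": "4111111111111111",
    "home_address": "12 Example Street",
}
print(sorted(minimize(raw)))  # ['amount', 'date', 'merchant']
```

An allow-list is safer than a block-list here, because a new sensitive field added upstream is dropped by default instead of leaking through.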

Related read : AI and Cognitive Thinking: An Important 2025 Guide to Staying Human

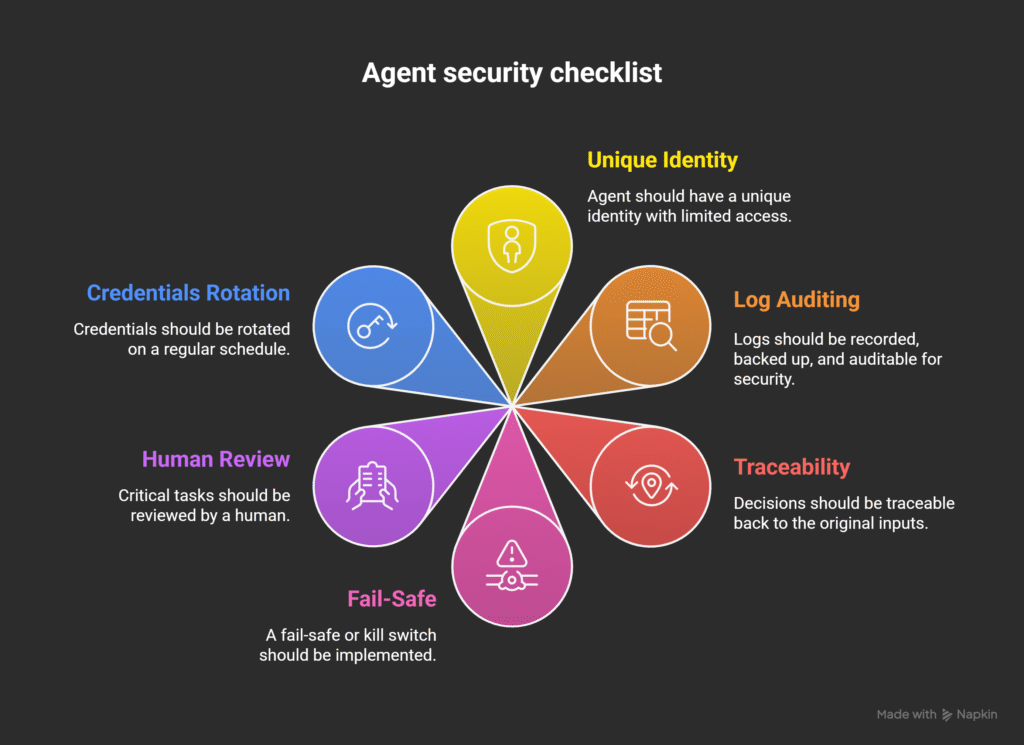

Quick Checklist: AgentOps Audit for Everyday Use

1. Does the agent have a unique identity and limited access?

2. Are logs recorded, backed up, and auditable?

3. Can you trace decisions back to inputs?

4. Is there a fail-safe/kill switch?

5. Are critical tasks human-reviewed?

6. Are credentials rotated regularly?

Run this 2-minute audit monthly to stay in control.

Frequently Asked Questions

Q1: What is AgentOps in AI?

AgentOps is the practice of monitoring, debugging, and controlling autonomous AI agents, ensuring their actions remain safe, reliable, and transparent.

Q2: How is AgentOps different from MLOps?

MLOps focuses on models (training, deployment, monitoring). AgentOps focuses on agents — autonomous systems that use models for decision-making.

Q3: Can individuals use AgentOps, or is it just for enterprises?

Both. Enterprises need AgentOps for compliance, while individuals can use it to monitor AI assistants for finance, scheduling, and personal automation.

Q4: What’s the biggest risk if I don’t use AgentOps?

The biggest risk is loss of control — agents making incorrect, insecure, or even harmful decisions without visibility or accountability.

Q5: Which tools should beginners start with?

AgentOps (open-source) or LangSmith are beginner-friendly and provide simple dashboards to get started.

Conclusion: Stay in Control of Your AI Agents

As AI agents become more capable, trust without verification is not an option. AgentOps offers a practical way to bring visibility, accountability, and security into the world of autonomous decision-making.

Whether you’re an individual automating your finances or an organization deploying multi-agent systems, the AgentOps playbook ensures you stay in control while reaping the benefits of autonomy.
