Deepfake Dangers in 2025: How to Spot, Prevent & Stay Protected

“With great power comes great responsibility.” We’ve all heard this phrase, but when it comes to artificial intelligence, the story changes. AI has immense power—but it cannot be responsible on its own. That responsibility falls on us.

Unfortunately, in 2025 AI's power is often misused. Beyond powering virtual assistants and automating routine tasks, AI now creates convincing illusions that blur the line between truth and fabrication. Deepfakes (AI-generated videos, voices, and images that mimic real people) have moved from niche internet experiments to mainstream tools.

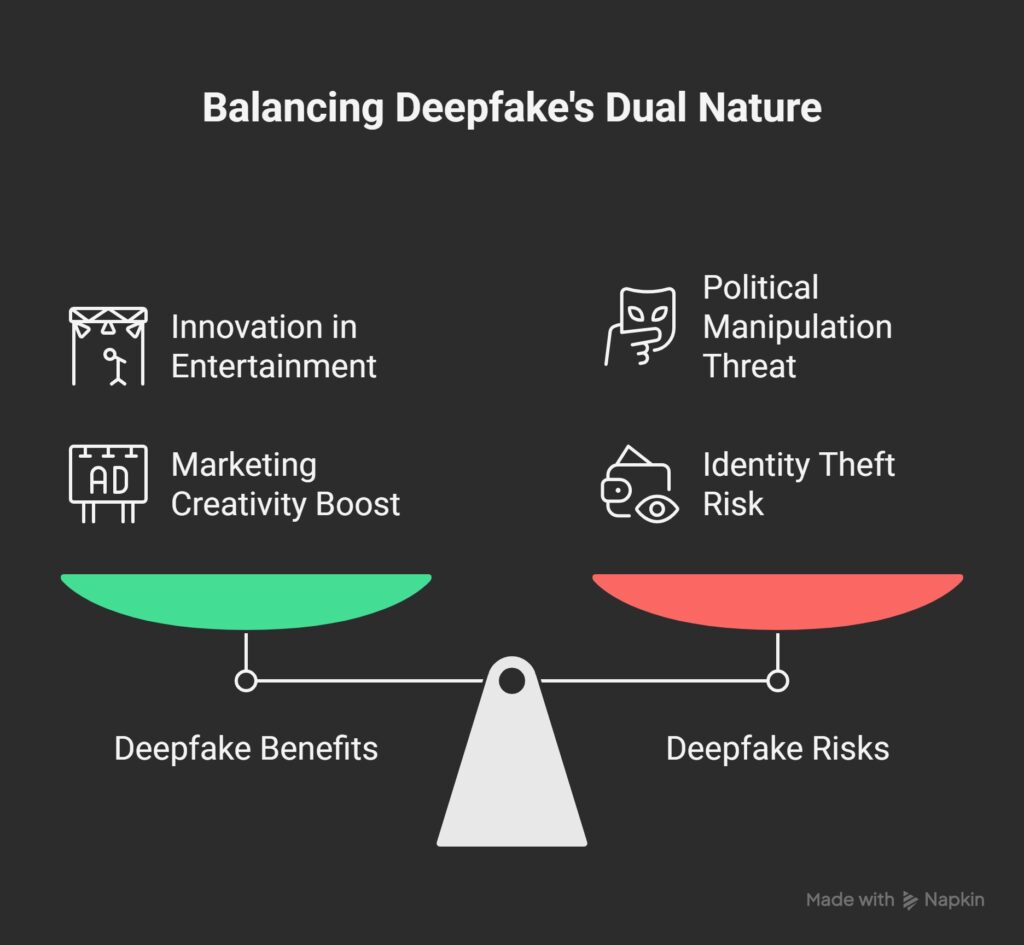

On one side, deepfakes bring innovation in entertainment and marketing. On the other, they pose serious threats, fueling scams, political manipulation, and identity theft. The rise of deepfakes marks a turning point in our digital lives—and staying aware is the first step to protecting ourselves.

One recent cybersecurity report found that deepfake-driven fraud attempts have risen by 300% since 2023 (that is, to four times the 2023 level), and experts warn the curve is still rising. With AI tools now available to anyone with a smartphone, the dangers of deepfakes in 2025 are more pressing than ever.

This post explores the growing risks, how to detect fake media, which legal frameworks exist, and practical steps you can take to stay safe.

What Are Deepfakes & Why They’re Exploding in 2025

Deepfakes are created using deep learning. The classic approach is the Generative Adversarial Network (GAN), which pits two neural networks against each other: a generator that produces content and a discriminator that judges whether it looks real. Over repeated training cycles, the generator learns to produce hyper-realistic images, videos, or voices. Other model families, such as autoencoders and diffusion models, are also widely used.
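To make the adversarial idea concrete, here is a minimal sketch of a toy GAN on one-dimensional numbers instead of images. Everything in it is an assumption chosen for illustration: the function name `train_toy_gan`, the linear generator `G(z) = a*z + b`, the logistic discriminator `D(x) = sigmoid(w*x + c)`, and the "real" data drawn from a bell curve centered at 4. Real deepfake systems use deep networks and far more data, so treat this only as a picture of the two-player training loop.

```python
import math
import random


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(steps=500, batch=64, lr=0.05, seed=0):
    """Alternate discriminator and generator updates on 1-D data.

    Hypothetical toy setup: real samples come from N(4, 1),
    the generator is G(z) = a*z + b, and the discriminator is
    D(x) = sigmoid(w*x + c). Returns the final (a, b, w, c).
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters
    w, c = 0.1, 0.0  # discriminator parameters
    for _ in range(steps):
        real = [rng.gauss(4.0, 1.0) for _ in range(batch)]
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [a * z + b for z in noise]

        # Discriminator step: push D(real) toward 1 and D(fake) toward 0
        # by descending the binary cross-entropy loss.
        grad_w = grad_c = 0.0
        for x in real:
            err = sigmoid(w * x + c) - 1.0
            grad_w += err * x
            grad_c += err
        for x in fake:
            err = sigmoid(w * x + c)
            grad_w += err * x
            grad_c += err
        w -= lr * grad_w / batch
        c -= lr * grad_c / batch

        # Generator step: adjust a and b so the discriminator rates
        # the fakes as more "real" (non-saturating loss -log D(fake)).
        grad_a = grad_b = 0.0
        for z, x in zip(noise, fake):
            err = (sigmoid(w * x + c) - 1.0) * w
            grad_a += err * z
            grad_b += err
        a -= lr * grad_a / batch
        b -= lr * grad_b / batch
    return a, b, w, c


if __name__ == "__main__":
    a, b, w, c = train_toy_gan()
    print(f"generator: G(z) = {a:.2f}*z + {b:.2f}")
```

The generator never sees the real data directly. It only learns from the discriminator's verdicts, which is why the two networks improve together. Toy GANs like this one often oscillate rather than settle, a known difficulty of adversarial training.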

In 2025, deepfake technology has become:

- Cheaper: Open-source libraries and mobile apps allow anyone to generate convincing deepfakes.

- Faster: What once required hours of GPU rendering can now be done in minutes.

- Accessible: Tools that were once restricted to AI labs are now available on consumer devices.

The result? Widespread adoption—both for harmless fun and malicious intent.

The Real Dangers of Deepfakes

1. Identity Theft & Scams

One of the fastest-growing threats comes from scammers using AI to impersonate people. Imagine receiving a video call from your “boss” asking you to urgently transfer money, or a voice message from a family member requesting financial help. In 2025, these scams are not only possible—they’re happening.

2. Political Manipulation & Misinformation

As elections approach in countries around the world, deepfakes are being weaponized to spread fake speeches, manipulate public opinion, and erode trust in institutions. A single fabricated video can trigger mass confusion before fact-checkers catch up.

3. Emotional & Psychological Impact

Victims of non-consensual deepfakes, particularly in the context of explicit content, suffer long-lasting trauma. Even if proven fake, the damage to reputation and mental health is hard to reverse.

4. Corporate Fraud & Cybersecurity Risks

Businesses are facing risks too. Fraudsters are impersonating CEOs to trick employees into transferring funds or disclosing sensitive information. In some cases, deepfake-generated identities are being used to bypass biometric authentication systems.

How to Spot a Deepfake

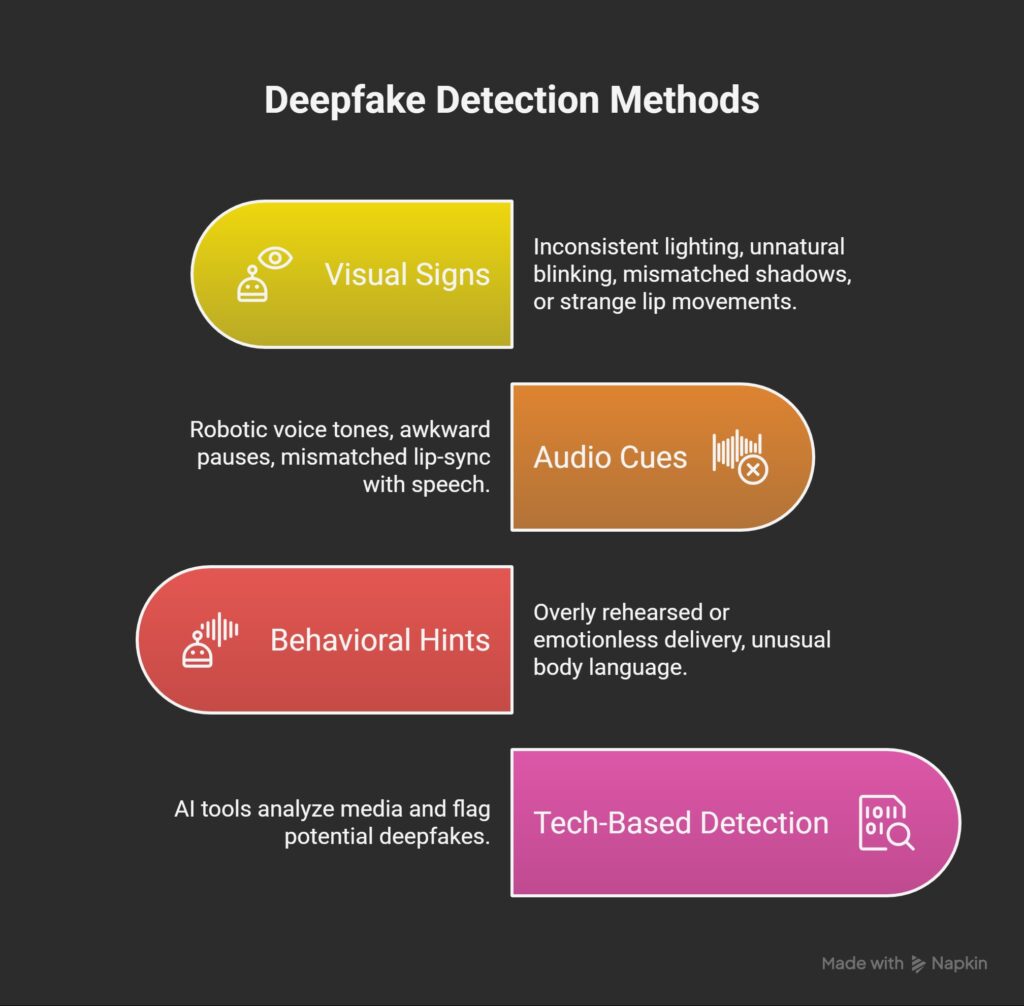

While detection tools are improving, individuals can still rely on human observation. Here’s what to look for:

- Visual Signs: Inconsistent lighting, unnatural blinking, mismatched shadows, or strange lip movements.

- Audio Cues: Robotic voice tones, awkward pauses, or speech that is out of sync with lip movements.

- Behavioral Hints: Overly rehearsed or emotionless delivery, unusual body language.

Tech-Based Detection: In 2025, multiple AI-powered tools can analyze media and flag potential deepfakes. Browser extensions, mobile apps, and even built-in features in messaging platforms now offer quick checks.

Still, awareness is key. The first line of defense is skepticism—don’t trust digital content blindly, even if it looks real.

Deepfake Scams in India & Worldwide

India has witnessed a surge in deepfake-driven scams. In recent cases:

- Fraudsters used AI-generated voices of company executives to authorize fake fund transfers.

- Political speeches were fabricated to stir controversy.

- Senior citizens were targeted with deepfake “grandchildren” asking for money.

Globally, banks, law firms, and even governments are grappling with the same challenges. In Europe, a UK-based energy firm was reportedly tricked into transferring about €220,000 after a phone call that used an AI-cloned executive voice. In the U.S., fake celebrity endorsements continue to dupe consumers into scams. The trend is clear: deepfakes are not only a cybersecurity issue; they are a societal threat.

Legal Landscape in 2025

As the dangers rise, governments are scrambling to respond.

- India: While the Information Technology Act, 2000 and the Digital Personal Data Protection Act, 2023 touch on digital fraud, explicit deepfake regulations are still evolving. Some states have proposed penalties for creating or sharing harmful deepfakes.

- European Union: The EU AI Act includes strict rules on synthetic media disclosure, requiring labels on AI-generated content.

- United States: Several states have passed laws banning non-consensual deepfakes, especially in elections and explicit content.

- China: The government has introduced mandatory watermarking for AI-generated media.

Despite progress, the global legal framework is fragmented. A unified approach is needed to combat cross-border misuse.

Tools & Techniques to Protect Yourself

- Use Deepfake Detection Apps: Tools like Deepware, Reality Defender, and Microsoft Video Authenticator can flag likely fakes. They are improving, but none is foolproof, so treat a result as one signal rather than proof.

- Check Source Authenticity: Verify the origin of videos, especially those received via messaging apps or social media.

- Enable Multi-Factor Authentication: Don’t rely solely on biometric systems; add PINs or device-based verification.

- Adopt Cyber Hygiene: Be cautious with urgent requests for money or sensitive data, and with sensational political content. Confirm the request through a second channel you already trust before acting.

- Stay Updated: Follow cybersecurity advisories and enable automatic updates on your apps and browsers.
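To show why a second factor helps even when a face or voice can be faked, here is a minimal sketch of the time-based one-time password (TOTP) scheme from RFC 6238, which many authenticator apps follow. The function names (`hotp`, `totp`, `verify_totp`) are illustrative choices for this post, not a library API, and the key is the published RFC test key, not something to use in practice. A real deployment should rely on a maintained authentication library rather than hand-rolled code.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based code: HOTP over the number of elapsed 30-second steps."""
    if at is None:
        at = time.time()
    return hotp(secret, int(at) // step, digits)


def verify_totp(secret: bytes, candidate: str, at=None,
                step: int = 30, digits: int = 6, window: int = 1) -> bool:
    """Accept codes from the current step and `window` steps either side."""
    if at is None:
        at = time.time()
    counter = int(at) // step
    for c in range(max(0, counter - window), counter + window + 1):
        # compare_digest avoids leaking information through timing
        if hmac.compare_digest(hotp(secret, c, digits), candidate):
            return True
    return False


if __name__ == "__main__":
    demo_key = b"12345678901234567890"  # RFC 6238 test key, demo only
    print(totp(demo_key, at=59, digits=8))
```

With the RFC test key and a timestamp of 59 seconds, the 8-digit code is 94287082, which matches the published test vector. The point for deepfake defense is that the code depends on a secret stored on your device, so a convincing fake voice or face alone cannot produce it.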

Future Outlook: Can We Win Against Deepfakes?

The fight against deepfakes is essentially AI vs AI. For every advancement in synthetic media generation, new detection algorithms are being developed. Yet, the arms race is ongoing.

Looking ahead:

- AI watermarking may become a global standard.

- Social media platforms will integrate mandatory verification for high-impact content.

- Public awareness campaigns will play a crucial role in building “digital literacy.”

Ultimately, technology alone cannot solve the problem. Society must combine law, education, and AI-powered tools to defend against this threat.

Conclusion

Deepfakes in 2025 are no longer experimental tricks—they’re a real-world menace shaping politics, finance, and everyday interactions. From scams and identity theft to misinformation and trauma, the dangers are multiplying.

But with vigilance, legal safeguards, and AI-powered detection, individuals and organizations can fight back. The key is awareness: question what you see, verify sources, and use the tools available. The line between real and fake may be thinner than ever, but with the right knowledge, you can stay one step ahead.
