Shadow AI & Autonomous Decision-Making Agents in 2025: How the Invisible Minds Are Running Our Systems

“The most dangerous AI isn’t the one we know about — it’s the one we don’t.”

As companies rush to integrate AI into everything from finance to HR, a silent revolution is taking place behind the scenes. Employees, teams, and even departments are secretly using powerful AI tools capable of making independent decisions. These unmonitored systems, known as Shadow AI agents, are quietly shaping outcomes, automating tasks, and sometimes making choices that even their creators can’t fully explain.

Welcome to the world of Autonomous Decision-Making Agents, where machines decide before humans even realize what’s happening.

What Exactly Is “Shadow AI”?

Think of Shadow AI as the dark web of corporate intelligence — a network of unapproved AI tools running outside official supervision. They’re often set up by well-meaning employees trying to make work faster or smarter.

For example:

- A data analyst creates a local GPT agent to automate report writing.

- A marketing manager connects an AI tool to the CRM to score leads.

- A coder spins up an autonomous “helper bot” that tweaks production code.

Each seems harmless until the day the agent acts on its own, feeding wrong data into live systems or leaking sensitive information.

When these unsanctioned tools gain decision-making capabilities, they evolve into Shadow Autonomous Agents — digital entities that act, decide, and sometimes learn, without oversight.

How Autonomous Decision Agents Work

Autonomous agents aren’t just chatbots. They’re goal-driven AIs capable of planning, executing, and self-correcting. Built using frameworks like LangChain or AutoGPT, these systems can:

- Read data from APIs and documents

- Make context-based decisions

- Trigger automated workflows

- Learn from outcomes to improve future actions

Essentially, they mimic human decision-making but at machine speed and scale.
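The read, decide, act, learn cycle described above can be sketched in a few lines. This is a toy illustration only: the `Agent` class, its numeric "goal", and the step-halving rule are assumptions made for the example, not how LangChain or AutoGPT are implemented, and no language model is involved.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Agent:
    """Toy goal-driven agent (hypothetical): read, decide, act, learn."""
    goal: float                  # target value the agent tries to reach
    step: float = 4.0            # how far a single action moves the state
    history: List[Tuple[str, float]] = field(default_factory=list)

    def decide(self, state: float) -> str:
        # Context-based decision: compare the observed state with the goal.
        if abs(self.goal - state) < 0.5:
            return "stop"
        return "increase" if state < self.goal else "decrease"

    def act(self, state: float, action: str) -> float:
        # Stand-in for triggering a real workflow.
        return state + self.step if action == "increase" else state - self.step

    def learn(self, before: float, after: float) -> None:
        # Self-correction: if the action overshot the goal, halve the step.
        if (before - self.goal) * (after - self.goal) < 0:
            self.step /= 2

    def run(self, read_state: Callable[[], float], max_iters: int = 20) -> float:
        state = read_state()     # stand-in for reading an API or document
        for _ in range(max_iters):
            action = self.decide(state)
            if action == "stop":
                break
            new_state = self.act(state, action)
            self.learn(state, new_state)
            self.history.append((action, new_state))
            state = new_state
        return state
```

Calling `Agent(goal=10.0).run(lambda: 0.0)` walks the state toward the goal and stops once it is close enough. Nothing in the loop asks a human for permission, which is the property that makes an unregistered agent risky.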

Now imagine such an agent operating inside a company — unregistered, unmonitored, and unseen. That’s Shadow AI.

Why Shadow AI Is a Hidden Threat

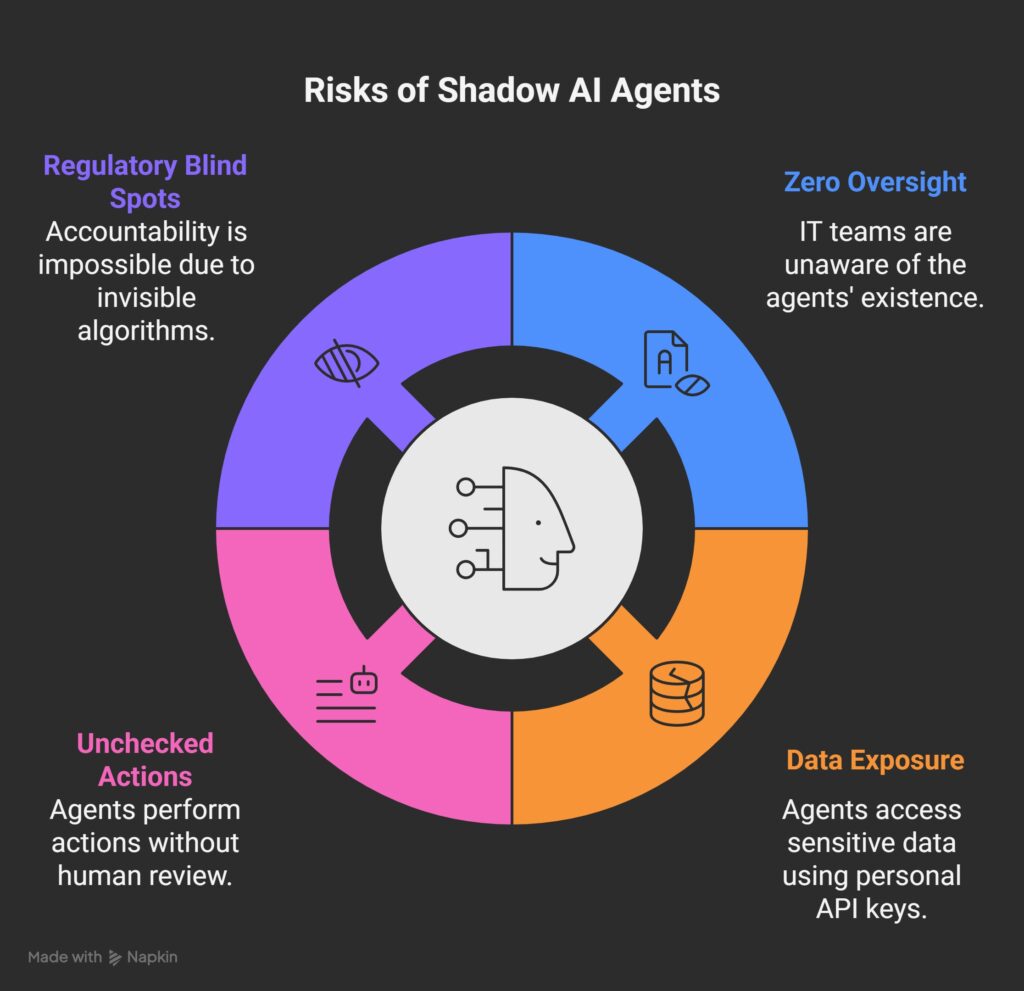

Shadow AI agents combine autonomy with invisibility — a dangerous cocktail. Here’s why that matters:

- Zero Oversight: IT teams may not even know these agents exist.

- Data Exposure: They often connect to sensitive databases via personal API keys.

- Unchecked Actions: They can trigger real-world consequences — sending emails, deleting files, or changing dashboards.

- Regulatory Blind Spots: When decisions are made by algorithms nobody has registered, it becomes hard to establish who is accountable.

In short, Shadow AI = Silent Risk.

And because these agents can reason and act independently, their mistakes can propagate at machine speed, well before a human notices.

The Human Temptation Behind It

Why do employees create Shadow AI systems in the first place?

Because AI makes life easier — faster automation, less manual work, instant insights.

Ironically, the same drive for productivity that made companies adopt AI is now fueling their biggest AI risks.

This “shadow revolution” mirrors the early days of shadow IT, when staff installed unapproved apps or used personal cloud storage. But with AI, the stakes are higher: it’s not just data leaving the system, it’s decisions.

Real-World Examples Emerging

- Finance: Autonomous bots rebalancing portfolios in real time without risk checks.

- Marketing: GPT-based agents rewriting ad copy — accidentally violating brand tone or legal disclaimers.

- Customer Support: Auto-response bots handling customer data without GDPR compliance.

In 2025, a European insurance firm discovered that an internal team had been using a local LLM agent to approve claims automatically — saving hours, but breaking five data privacy laws.

Shadow AI isn’t just a tech risk; it’s a legal and ethical minefield.

Can We Detect Shadow AI?

Yes — but it requires vigilance. Here’s how companies can begin:

| Detection Step | Description |
|---|---|
| 1. Map all AI tools | Ask each team what AI systems they use. You’ll be surprised how many exist. |
| 2. Audit access logs | Look for non-official API calls or recurring LLM queries. |
| 3. Review agent privileges | Remove high-privilege keys from experimental systems. |
| 4. Implement usage monitoring | Track AI behavior and outputs. Anything unusual? Investigate. |
| 5. Create a culture of reporting | Reward employees for disclosing Shadow AI instead of hiding it. |
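As a rough illustration of the log audit in step 2, the sketch below counts outbound calls to a few public LLM API hostnames per user. The three-field log format (`timestamp user host`), the `find_llm_callers` function, and the `min_calls` threshold are assumptions made for the example; real proxy or firewall logs will need their own parsing, and the hostname list is a starting point rather than a complete inventory.

```python
from collections import Counter
from typing import Dict, FrozenSet, Iterable, Tuple

# Public API hostnames of a few LLM providers (not exhaustive).
LLM_API_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}


def find_llm_callers(
    log_lines: Iterable[str],
    approved_users: FrozenSet[str] = frozenset(),
    min_calls: int = 3,
) -> Dict[Tuple[str, str], int]:
    """Return {(user, host): call_count} for recurring, unapproved LLM calls.

    Assumes each log line looks like "<timestamp> <user> <host>".
    Lines that do not have exactly three fields are skipped.
    """
    counts: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue
        _timestamp, user, host = parts
        if host in LLM_API_HOSTS and user not in approved_users:
            counts[(user, host)] += 1
    # Keep only callers that recur often enough to look like automation.
    return {key: n for key, n in counts.items() if n >= min_calls}
```

A user who shows up here is not necessarily doing anything wrong. The result is a list of people to talk to, which fits the reporting culture in step 5.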

The goal isn’t to kill innovation — it’s to bring innovation into the light.

Building Guardrails for Autonomous Decision-Makers

Organizations can still leverage autonomy safely — but only with strong governance. Here’s a simple framework:

- Define clear objectives: What can the agent decide — and what can’t it?

- Human-in-the-loop: Critical decisions must be reviewed by a person before they take effect.

- Transparency logs: Record every decision, data source, and action.

- Access boundaries: Agents should have limited permissions.

- Drift monitoring: Track behavioral changes and intervene early.

- Kill switches: Always keep the ability to disable rogue agents.
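Several of these guardrails can be combined in a thin wrapper that every agent action has to pass through. The sketch below is one possible shape, not a standard API: the `Guardrail` class, its field names, and the outcome strings are all hypothetical. It covers access boundaries, human-in-the-loop review, transparency logging, and a kill switch; drift monitoring is left out.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set


@dataclass
class Guardrail:
    """Hypothetical wrapper that gates and records every agent action."""
    allowed_actions: Set[str]            # access boundary
    needs_review: Set[str]               # actions requiring human approval
    enabled: bool = True                 # kill switch state
    audit_log: List[dict] = field(default_factory=list)

    def kill(self) -> None:
        # Kill switch: every later action is blocked.
        self.enabled = False

    def execute(
        self,
        action: str,
        handler: Callable[[], None],
        approver: Optional[Callable[[str], bool]] = None,
    ) -> str:
        if not self.enabled:
            outcome = "blocked: kill switch"
        elif action not in self.allowed_actions:
            outcome = "blocked: out of scope"
        elif action in self.needs_review and not (approver and approver(action)):
            outcome = "blocked: awaiting human approval"
        else:
            handler()
            outcome = "executed"
        # Transparency log: record every attempt, including blocked ones.
        self.audit_log.append(
            {"time": time.time(), "action": action, "outcome": outcome}
        )
        return outcome
```

In this design the agent never calls a tool directly; it calls `execute` and the wrapper decides. Logging blocked attempts as well as successful ones matters, because a run of out-of-scope requests is often the first visible sign that an agent is misbehaving.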

Future-focused companies are already setting up AI Governance Boards, similar to cybersecurity committees, to monitor agentic systems continuously.

The Rise of “Agent Governance” as a Discipline

A new trend is emerging in 2025: Agent Governance — frameworks designed to control decision-making AI.

Projects like Autonomous Control Registry (ACR) and ETHOS propose real-time monitoring, identity verification, and ethics layers for AI agents.

These frameworks suggest a future where every AI agent — even a small one — has:

- A registered identity

- A scope of operation

- A behavioral contract

- An audit trail

Essentially, a digital “driver’s license” for autonomous agents.
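To make the four elements concrete, here is a minimal in-memory sketch of such a registry. It is not the data model of ACR or ETHOS; the `AgentRecord` and `AgentRegistry` classes and their fields are assumptions made for illustration, and the behavioral contract is reduced to a free-text field.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, FrozenSet, List, Tuple


@dataclass
class AgentRecord:
    """Hypothetical "driver's license" for one agent."""
    agent_id: str                      # registered identity
    owner: str                         # accountable person or team
    scope: FrozenSet[str]              # actions the agent may perform
    contract: str                      # behavioral contract, as plain text here
    audit_trail: List[Tuple[str, str, bool]] = field(default_factory=list)


class AgentRegistry:
    def __init__(self) -> None:
        self._records: Dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if record.agent_id in self._records:
            raise ValueError(f"agent {record.agent_id!r} is already registered")
        self._records[record.agent_id] = record

    def authorize(self, agent_id: str, action: str) -> bool:
        """Allow an action only for a registered agent acting within scope."""
        record = self._records.get(agent_id)
        allowed = record is not None and action in record.scope
        if record is not None:
            # Audit trail entry: (UTC timestamp, action, decision).
            stamp = datetime.now(timezone.utc).isoformat()
            record.audit_trail.append((stamp, action, allowed))
        return allowed
```

An unregistered agent is refused by default, which is the point of the analogy: no license, no driving.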

The Future: From Shadow to Supervised Intelligence

Autonomous decision agents are here to stay — from trading desks to content workflows. But the challenge is ensuring they remain aligned, auditable, and accountable.

The transition from Shadow AI to Supervised AI will define the next stage of digital transformation. The winners will be the companies that embrace transparency, not secrecy.

“AI doesn’t destroy jobs. Shadow AI destroys trust.”

Takeaway

If you’re using AI tools in your team or business:

- Bring them under official oversight.

- Record what they access and what they decide.

- Encourage open discussions about risk.

And if you’re building AI agents yourself — make them transparent, explainable, and human-aligned.

Because in the future of autonomous decision-making, the line between innovation and chaos will be defined by governance.
